Introducing pylint-django 2.0

Today I have released pylint-django version 2.0 on PyPI. The changes are centered around compatibility with the latest pylint 2.0 and astroid 2.0 versions. I've also bumped pylint-django's version number to reflect that.

A major component, class transformations, was updated so don't be surprised if there are bugs. All the existing test cases pass but you never know what sort of edge case there could be.

I'm also hosting a workshop/corporate training about writing pylint plugins. If you are interested see this page!

Thanks for reading and happy testing!


Introducing pylint-django 0.8.0

Since my previous post was about writing pylint plugins I figured I'd let you know that I've released pylint-django version 0.8.0 over the weekend. This release merges all pull requests which were pending till now so make sure to read the change log.

Starting with this release Colin Howe and I are the new maintainers of this package. My immediate goal is to triage all of the open issues and figure out if they still reproduce. If they do, I will try to come up with fixes for them or at least get the conversation going again.

My next goal is to integrate pylint-django with Kiwi TCMS and start resolving all the 4000+ errors and warnings that it produces.

You are welcome to contribute of course. I'm also interested in hosting a workshop on the topic of pylint plugins.

Thanks for reading and happy testing!


How to write pylint checker plugins

In this post I will walk you through the process of learning how to write additional checkers for pylint!

Prerequisites

- Read Contributing to pylint to get basic knowledge of how to execute the test suite and how it is structured. Basically call tox -e py36. Verify that all tests PASS locally!

- Read pylint's How To Guides, in particular the section about writing a new checker. A plugin is usually a Python module that registers a new checker.

- Most of pylint checkers are AST based, meaning they operate on the abstract syntax tree of the source code. You will have to familiarize yourself with the AST node reference for the astroid and ast modules. Pylint uses Astroid for parsing and augmenting the AST.

  NOTE: there is compact and excellent documentation provided by the Green Tree Snakes project. I would recommend the Meet the Nodes chapter.

  Astroid also provides exhaustive documentation and node API reference.

  WARNING: sometimes Astroid node class names don't match the ones from ast!

- Your interactive shell weapons are ast.dump(), ast.parse(), astroid.parse() and astroid.extract_node(). I use them inside an interactive Python shell to figure out how a piece of source code is parsed and converted back to AST nodes! You can also try this ast node pretty printer! I personally haven't used it.

How pylint processes the AST tree

Every checker class may include special methods with names visit_xxx(self, node) and leave_xxx(self, node) where xxx is the lowercase name of the node class (as defined by astroid). These methods are executed automatically when the parser iterates over nodes of the respective type.

All of the magic happens inside such methods. They are responsible for collecting information about the context of specific statements or patterns that you wish to detect. The hard part is figuring out how to collect all the information you need because sometimes it can be spread across nodes of several different types (e.g. more complex code patterns).

There is a special decorator called @utils.check_messages. You have to list all message ids that your visit_ or leave_ method will generate!
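To make the visit_/leave_ naming convention concrete, here is a minimal sketch of a tree walker built on the standard library ast module. It is not pylint's actual implementation; MiniWalker, FunctionTracker and trace are hypothetical names used only for illustration, and the node class names come from ast rather than astroid.

```python
import ast


class MiniWalker:
    """Walk a stdlib ast tree and call visit_xxx / leave_xxx on a checker."""

    def __init__(self, checker):
        self.checker = checker

    def walk(self, node):
        # xxx is the lowercase name of the node class, e.g. FunctionDef -> functiondef
        name = type(node).__name__.lower()
        visit = getattr(self.checker, "visit_" + name, None)
        leave = getattr(self.checker, "leave_" + name, None)
        if visit is not None:
            visit(node)
        for child in ast.iter_child_nodes(node):
            self.walk(child)
        if leave is not None:
            leave(node)


class FunctionTracker:
    """Hypothetical checker: records when functions are entered and left."""

    def __init__(self):
        self.events = []

    def visit_functiondef(self, node):
        self.events.append(("visit", node.name))

    def leave_functiondef(self, node):
        self.events.append(("leave", node.name))


def trace(source):
    """Parse source and return the visit/leave events in the order they fired."""
    checker = FunctionTracker()
    MiniWalker(checker).walk(ast.parse(source))
    return checker.events
```

The leave_ method fires only after all children of the node have been processed, which is what makes it useful for checks that need to know everything inside a function before deciding.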

How to select message codes and IDs

One of the most unclear things for me is message codes. pylint docs say

The message-id should be a 5-digit number, prefixed with a message category. There are multiple message categories, these being C, W, E, F, R, standing for Convention, Warning, Error, Fatal and Refactoring. The rest of the 5 digits should not conflict with existing checkers and they should be consistent across the checker. For instance, the first two digits should not be different across the checker.

I usually have trouble with the numbering part so you will have to get creative or look at existing checker codes.
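A small helper can at least catch inconsistent numbering inside a single checker. The sketch below assumes ids shaped like R2119 from the example later in this post, i.e. one category letter followed by four digits; check_message_ids is a hypothetical helper and not part of pylint, and it does not detect clashes with ids used by other checkers.

```python
import re

# Assumption: ids look like "R2119" (category letter + four digits)
MSG_ID = re.compile(r"[CWEFR]\d{4}")


def check_message_ids(msgs):
    """Return a list of problems found in the keys of a checker's msgs dict."""
    problems = []
    prefixes = set()
    for msg_id in msgs:
        if not MSG_ID.fullmatch(msg_id):
            problems.append("%s: malformed id" % msg_id)
            continue
        # the first two digits should be the same across the checker
        prefixes.add(msg_id[1:3])
    if len(prefixes) > 1:
        problems.append("mixed prefixes: %s" % ", ".join(sorted(prefixes)))
    return problems
```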

Practical example

In Kiwi TCMS there's legacy code that looks like this:

    def add_cases(run_ids, case_ids):
        trs = TestRun.objects.filter(run_id__in=pre_process_ids(run_ids))
        tcs = TestCase.objects.filter(case_id__in=pre_process_ids(case_ids))

        for tr in trs.iterator():
            for tc in tcs.iterator():
                tr.add_case_run(case=tc)

        return

Notice the dangling return statement at the end! It is useless because when missing the default return value of this function will still be None. So I've decided to create a plugin for that.

Armed with the knowledge above I first try the ast parser in the console:

    Python 3.6.3 (default, Oct 5 2017, 20:27:50)
    [GCC 4.8.5 20150623 (Red Hat 4.8.5-11)] on linux
    Type "help", "copyright", "credits" or "license" for more information.
    >>> import ast
    >>> import astroid
    >>> ast.dump(ast.parse('def func():\n return'))
    "Module(body=[FunctionDef(name='func', args=arguments(args=[], vararg=None, kwonlyargs=[], kw_defaults=[], kwarg=None, defaults=[]), body=[Return(value=None)], decorator_list=[], returns=None)])"
    >>>
    >>>
    >>> node = astroid.parse('def func():\n return')
    >>> node
    <Module l.0 at 0x7f5b04621b38>
    >>> node.body
    [<FunctionDef.func l.1 at 0x7f5b046219e8>]
    >>> node.body[0]
    <FunctionDef.func l.1 at 0x7f5b046219e8>
    >>> node.body[0].body
    [<Return l.2 at 0x7f5b04621c18>]

As you can see there is a FunctionDef node representing the function and it has a body attribute which is a list of all statements inside the function. The last element is .body[-1] and it is of type Return! The Return node also has an attribute called .value which is the return value! The complete code will look like this:

    import astroid

    from pylint import checkers
    from pylint import interfaces
    from pylint.checkers import utils


    class UselessReturnChecker(checkers.BaseChecker):
        __implements__ = interfaces.IAstroidChecker

        name = 'useless-return'

        msgs = {
            'R2119': ("Useless return at end of function or method",
                      'useless-return',
                      'Emitted when a bare return statement is found at the end of '
                      'function or method definition'
                      ),
        }

        @utils.check_messages('useless-return')
        def visit_functiondef(self, node):
            """
                Checks for presence of return statement at the end of a function
                "return" or "return None" are useless because None is the default
                return type if they are missing
            """
            # if the function has empty body then return
            if not node.body:
                return

            last = node.body[-1]
            if isinstance(last, astroid.Return):
                # e.g. "return"
                if last.value is None:
                    self.add_message('useless-return', node=node)
                # e.g. "return None"
                elif isinstance(last.value, astroid.Const) and (last.value.value is None):
                    self.add_message('useless-return', node=node)


    def register(linter):
        """required method to auto register this checker"""
        linter.register_checker(UselessReturnChecker(linter))

Here's how to execute the new plugin:

    $ PYTHONPATH=./myplugins pylint --load-plugins=uselessreturn tcms/xmlrpc/api/testrun.py | grep useless-return
    W: 40, 0: Useless return at end of function or method (useless-return)
    W:117, 0: Useless return at end of function or method (useless-return)
    W:242, 0: Useless return at end of function or method (useless-return)
    W:495, 0: Useless return at end of function or method (useless-return)
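If you want to experiment with the detection logic without installing pylint, the same idea can be sketched with the standard library ast module alone. This is an illustration, not the plugin itself: find_useless_returns is a hypothetical helper, it uses ast.Constant (what recent Python 3 versions produce for a literal None) instead of astroid.Const, and it reports the line of the return statement rather than the function node.

```python
import ast


def find_useless_returns(source):
    """Return (function name, line number) for functions ending in a bare return."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            continue
        last = node.body[-1]
        if not isinstance(last, ast.Return):
            continue
        # "return" has value None; "return None" has a Constant whose value is None
        if last.value is None or (
            isinstance(last.value, ast.Constant) and last.value.value is None
        ):
            found.append((node.name, last.lineno))
    return found
```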

NOTES:

- If you contribute this code upstream and pylint releases it you will get a traceback:

      pylint.exceptions.InvalidMessageError: Message symbol 'useless-return' is already defined

  This means your checker has been released in the latest version and you can drop the custom plugin!

- This example is fairly simple because the AST tree provides the information we need in a very handy way. Take a look at some of my other checkers to get a feeling of what a more complex checker looks like!

- Write and run tests for your new checkers, especially if contributing upstream. Keep in mind that the new checker will be executed against existing code and in combination with other checkers, which could lead to some interesting results. I will leave the testing to you; it is all described in the documentation.

This particular example I've contributed as PR #1821 which happened to contradict an existing checker. The update, raising warnings only when there's a single return statement in the function body, is PR #1823.

Workshop around the corner

I will be working together with HackSoft on an in-house workshop/training for writing pylint plugins. I'm also looking at reviving pylint-django so we can write more plugins specifically for Django based projects.

If you are interested in a workshop and training on the topic let me know!

Thanks for reading and happy testing!


On Pytest-django and LiveServerTestCase with initial data

While working on Kiwi TCMS I've had the opportunity to learn in-depth about how the standard test case classes in Django work. This is a quick post about creating initial data and order of execution!

Initial test data for TransactionTestCase or LiveServerTestCase

class LiveServerTestCase(TransactionTestCase), as the name suggests, provides a running Django instance during testing. We use that for Kiwi's XML-RPC API tests, issuing HTTP requests against the live server instance and examining the responses!

For testing to work we also need some initial data. There are a few key items that need to be taken into account to accomplish that:

- self._fixture_teardown() - performs ./manage.py flush which deletes all records from the database, including the ones created during initial migrations;

- self.serialized_rollback - when set to True will serialize initial records from the database into a string and then load this back. Required if subsequent tests need to have access to the records created during migrations!

- cls.setUpTestData is an attribute of class TestCase(TransactionTestCase) and hence can't be used to create records before any transaction based test case is executed.

- self._fixture_setup() is where the serialized rollback happens, thus it can be used to create initial data for your tests!

In Kiwi TCMS all XML-RPC test classes have serialized_rollback = True and implement a _fixture_setup() method instead of setUpTestData() to create the necessary records before testing!

NOTE: you can also use fixtures in the above scenario but I don't like using them and we've deleted all fixtures from Kiwi TCMS a long time ago so I didn't feel like going back to that!

Order of test execution

From Django's docs:

In order to guarantee that all TestCase code starts with a clean database, the Django test runner reorders tests in the following way:

- All TestCase subclasses are run first.

- Then, all other Django-based tests (test cases based on SimpleTestCase, including TransactionTestCase) are run with no particular ordering guaranteed nor enforced among them.

- Then any other unittest.TestCase tests (including doctests) that may alter the database without restoring it to its original state are run.

This is not of much concern most of the time but becomes important when you decide to mix and match transaction and non-transaction based tests into one test suite.
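The three groups from the quote can be sketched with plain unittest classes. The classes below are stand-ins that only mimic the inheritance chain of the real ones in django.test, and reorder is a hypothetical helper, not the Django test runner's code.

```python
import unittest


# Stand-ins for the Django classes; the real ones live in django.test
class SimpleTestCase(unittest.TestCase):
    pass


class TransactionTestCase(SimpleTestCase):
    pass


class TestCase(TransactionTestCase):
    pass


class LiveServerTestCase(TransactionTestCase):
    pass


def reorder(test_classes):
    """Sort test classes into the three groups described in the quote."""
    def group(cls):
        if issubclass(cls, TestCase):
            return 0  # TestCase subclasses run first
        if issubclass(cls, SimpleTestCase):
            return 1  # then all other Django-based tests
        return 2      # then plain unittest.TestCase tests
    # sorted() is stable, so discovery order is kept inside each group
    return sorted(test_classes, key=group)


# Hypothetical test classes named after the scenario in this post
class ProductAPITest(LiveServerTestCase):
    pass


class SerializerTest(TestCase):
    pass


class PlainTest(unittest.TestCase):
    pass
```

With this ordering SerializerTest always runs before ProductAPITest gets a chance to flush the database, regardless of the order in which the classes were discovered.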

As seen in Job #471.1, the tcms/xmlrpc/tests/test_serializer.py tests errored out! If you execute these tests standalone they all pass! The root cause is that these serializer tests are based on Django's test.TestCase class and are executed after a test.LiveServerTestCase class! The tests in tcms/xmlrpc/tests/test_product.py will flush the database, removing all records, including the ones from initial migrations. Then when test_serializer.py is executed it will call its factories, which in turn rely on initial records being available, and produce an error because these records have been deleted!

The reason for this is that pytest doesn't respect the order of execution for Django tests! As seen in the build log above tests are executed in the order in which they were discovered! My solution was not to use pytest (I don't need it for anything else)!

At the moment I'm dealing with strange errors/segmentation faults when running Kiwi's tests under Django 2.0. It looks like the http response has been closed before the client side tries to read it. Why this happens I have not been able to figure out yet. Expect another blog post when I do.

Thanks for reading and happy testing!


TransactionManagementError during testing with Django 1.10

During the past 3 weeks I've been debugging a weird error which started happening after I migrated KiwiTestPad to Django 1.10.7. Here is the reason why this happened.

Symptoms

After migrating to Django 1.10 all tests appeared to be working locally on SQLite however they failed on MySQL with

TransactionManagementError: An error occurred in the current transaction. You can't execute queries until the end of the 'atomic' block.

The exact same test cases failed on PostgreSQL with:

InterfaceError: connection already closed

Since version 1.10 Django executes all tests inside transactions so my first thoughts were related to the auto-commit mode. However upon closer inspection we can see that the line which triggers the failure is

self.assertTrue(users.exists())

which is essentially a SELECT query aka

User.objects.filter(username=username).exists()!

My tests were failing on a SELECT query!

Reading the numerous posts about TransactionManagementError I discovered it may be caused by a run-away cursor. The application did use raw SQL statements which I've converted promptly to ORM queries, that took me some time. Then I also fixed a couple of places where it used transaction.atomic() as well. No luck!

Then, after numerous experiments and tons of logging inside Django's own code I was able to figure out when the failure occurred and what events were in place. The test code looked like this:

    response = self.client.get('/confirm/')
    user = User.objects.get(username=self.new_user.username)
    self.assertTrue(user.is_active)

The failure was happening after the view had been rendered, on the first SELECT I ran against the database!

The problem was that the connection to the database had been closed midway during the transaction!

In particular (after more debugging of course) the sequence of events was:

- execute django/test/client.py::Client::get()

- execute django/test/client.py::ClientHandler::__call__(), which takes care to disconnect/connect signals.request_started and signals.request_finished which are responsible for tearing down the DB connection, so problem not here

- execute django/core/handlers/base.py::BaseHandler::get_response()

- execute django/core/handlers/base.py::BaseHandler::_get_response() which goes through the middleware (needless to say I did inspect all of it as well since there have been some changes in Django 1.10)

- execute response = wrapped_callback() while still inside BaseHandler._get_response()

- execute django/http/response.py::HttpResponseBase::close() which looks like

      # These methods partially implement the file-like object interface.
      # See https://docs.python.org/3/library/io.html#io.IOBase

      # The WSGI server must call this method upon completion of the request.
      # See http://blog.dscpl.com.au/2012/10/obligations-for-calling-close-on.html
      def close(self):
          for closable in self._closable_objects:
              try:
                  closable.close()
              except Exception:
                  pass
          self.closed = True
          signals.request_finished.send(sender=self._handler_class)

- signals.request_finished is fired

- django/db/__init__.py::close_old_connections() closes the connection!

IMPORTANT: On MySQL setting AUTOCOMMIT=False and CONN_MAX_AGE=None helps work around this problem but is not the solution for me because it didn't help on PostgreSQL.

Going back to HttpResponseBase::close() I started wondering who calls this method. The answer was that it was getting called by the @content.setter method at django/http/response.py::HttpResponse::content(), which is even more weird because we assign to self.content inside HttpResponse::__init__().

Root cause

The root cause of my problem was precisely this HttpResponse::__init__() method or rather the way we arrive at it inside the application.

The last line of the offending view was

    return HttpResponse(Prompt.render(
        request=request,
        info_type=Prompt.Info,
        info=msg,
        next=request.GET.get('next', reverse('core-views-index'))
    ))

and the Prompt class looks like this

    from django.shortcuts import render

    class Prompt(object):
        @classmethod
        def render(cls, request, info_type=None, info=None, next=None):
            return render(request, 'prompt.html', {
                'type': info_type,
                'info': info,
                'next': next
            })

Looking back at the internals of HttpResponse we see that

- if content is a string we call self.make_bytes()

- if the content is an iterator then we assign it and if the object has a close method then it is executed.

HttpResponse itself is an iterator, inherits from six.Iterator so when we initialize HttpResponse with another HttpResponse object (aka the content) we execute content.close() which unfortunately happens to close the database connection as well.

IMPORTANT: note that from the point of view of a person using the application the HTML content is exactly the same regardless of whether we have nested HttpResponse objects or not.

Also during normal execution the code doesn't run inside a transaction so we never notice the problem in production.

The fix of course is very simple, just return Prompt.render()!
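The mechanism can be reproduced without Django. The sketch below is a heavily simplified stand-in, not Django's actual code: FakeResponse and FakeConnection are hypothetical classes, and close() flips a flag directly where the real code goes through signals.request_finished and close_old_connections().

```python
class FakeConnection:
    """Stand-in for the database connection."""

    def __init__(self):
        self.open = True


class FakeResponse:
    """Simplified stand-in for HttpResponse; not Django's actual code."""

    def __init__(self, content, connection):
        self.connection = connection
        self.content = content  # goes through the setter below

    @property
    def content(self):
        return self._content

    @content.setter
    def content(self, value):
        if isinstance(value, bytes):
            self._content = value
            return
        if isinstance(value, str):
            self._content = value.encode()
            return
        # value is an iterator: consume it, then close it if it can be closed
        self._content = b"".join(value)
        if hasattr(value, "close"):
            value.close()

    def __iter__(self):
        return iter([self._content])

    def close(self):
        # plays the role of request_finished -> close_old_connections()
        self.connection.open = False
```

Wrapping one FakeResponse in another yields the same bytes but leaves the connection closed, while returning the inner response directly keeps it open. That mirrors why the rendered HTML looked fine while the next query in the test failed.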

Thanks for reading and happy testing!


Automatic Upstream Dependency Testing

Ever since RHEL 7.2 python-libs broke s3cmd I've been pondering an age old problem: How do I know if my software works with the latest upstream dependencies ? How can I pro-actively monitor for new versions and add them to my test matrix ?

Mixing together my previous experience with Difio and monitoring upstream sources, and Forbes Lindesay's GitHub Automation talk at DEVit Conf I came up with a plan:

- Make an application which will execute when new upstream version is available;

- Automatically update .travis.yml for the projects I'm interested in;

- Let Travis-CI execute my test suite for all available upstream versions;

- Profit!

How Does It Work

First we need to monitor upstream! RubyGems.org has nice webhooks interface, you can even trigger on individual packages. PyPI however doesn't have anything like this :(. My solution is to run a cron job every hour and parse their RSS stream for newly released packages. This has been working previously for Difio so I re-used one function from the code.

After finding anything we're interested in comes the hard part - automatically updating .travis.yml using the GitHub API. I've described this in more detail here. This time I've slightly modified the code to update only when needed and accept more parameters so it can be reused.

Travis-CI has a clean interface to specify environment variables and defining several of them creates a test matrix. This is exactly what I'm doing. .travis.yml is updated with a new ENV setting, which determines the upstream package version. After the commit a new build is triggered which includes the expanded test matrix.
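Here is a minimal sketch of that update step on its own. The sample file content, the version numbers and the add_version helper are made up for illustration, and the sketch assumes the env list is the last section of .travis.yml so that a new entry can simply be appended.

```python
# Hypothetical .travis.yml content; env must be the last section for this to work
SAMPLE_TRAVIS = """language: python
install:
  - pip install Markdown==$VERSION
script:
  - python setup.py test
env:
  - VERSION=2.6.3
"""


def add_version(travis_yml, new_version):
    """Append a VERSION entry to the env list unless it is already there."""
    text = travis_yml.rstrip()
    line = "  - VERSION=%s" % new_version
    if line not in text.splitlines():
        text += "\n" + line
    return text + "\n"
```

Calling add_version() a second time with the same version returns the content unchanged, which is what lets the monitor skip the commit when there is nothing new.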

Example

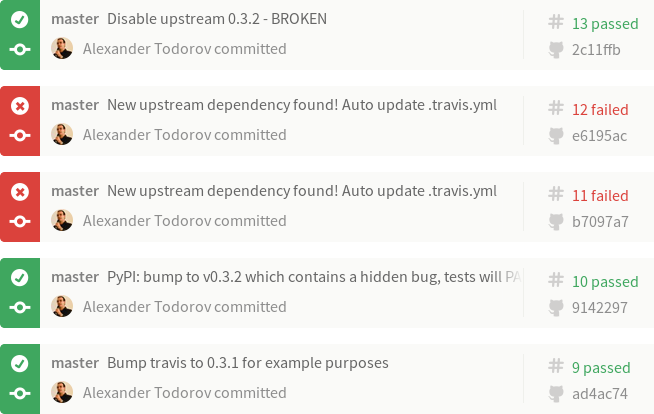

Imagine that our Project 2501 depends on FOO version 0.3.1. The build log illustrates what happened:

- Build #9 is what we've tested with FOO-0.3.1 and released to production. Test result is PASS!

- Build #10 - meanwhile upstream releases FOO-0.3.2 which causes our project to break. We're not aware of this and continue developing new features while all test results still PASS! When our customers upgrade their systems Project 2501 will break ! Tests didn't catch it because test matrix wasn't updated. Please ignore the actual commit message in the example! I've used the same repository for the dummy dependency package.

- Build #11 - the monitoring solution finds FOO-0.3.2 and updates the test matrix automatically. The build immediately breaks! More precisely the test with version 0.3.2 fails!

- Build #12 - we've alerted FOO.org about their problem and they've released FOO-0.3.3. Our monitor has found that and updated the test matrix. However the 0.3.2 test job still fails!

- Build #13 - we decide to work around the 0.3.2 failure or simply handle the error gracefully. In this example I've removed version 0.3.2 from the test matrix to simulate that. In reality I wouldn't touch .travis.yml but instead update my application and tests to check for that particular version. All test results are PASS again!

Btw Build #11 above was triggered manually (./monitor.py) while Build #12 came from OpenShift, my hosting environment.

At present I have this monitoring enabled for my new Markdown extensions and will also add it to django-s3-cache once it migrates to Travis-CI (it uses drone.io now).

Enough Talk, Show me the Code

    #!/usr/bin/env python

    import os
    import sys
    import json
    import base64
    import httplib
    from pprint import pprint
    from datetime import datetime
    from xml.dom.minidom import parseString

    def get_url(url, post_data = None):
        # GitHub requires a valid UA string
        headers = {
            'User-Agent' : 'Mozilla/5.0 (X11; Linux x86_64; rv:10.0.5) Gecko/20120601 Firefox/10.0.5',
        }

        # shortcut for GitHub API calls
        if url.find("://") == -1:
            url = "https://api.github.com%s" % url

        if url.find('api.github.com') > -1:
            if not os.environ.has_key("GITHUB_TOKEN"):
                raise Exception("Set the GITHUB_TOKEN variable")
            else:
                headers.update({
                    'Authorization': 'token %s' % os.environ['GITHUB_TOKEN']
                })

        (proto, host_path) = url.split('//')
        (host_port, path) = host_path.split('/', 1)
        path = '/' + path

        if url.startswith('https'):
            conn = httplib.HTTPSConnection(host_port)
        else:
            conn = httplib.HTTPConnection(host_port)

        method = 'GET'
        if post_data:
            method = 'POST'
            post_data = json.dumps(post_data)

        conn.request(method, path, body=post_data, headers=headers)
        response = conn.getresponse()

        if (response.status == 404):
            raise Exception("404 - %s not found" % url)

        result = response.read().decode('UTF-8', 'replace')
        try:
            return json.loads(result)
        except ValueError:
            # not a JSON response
            return result

    def post_url(url, data):
        return get_url(url, data)

    def monitor_rss(config):
        """
            Scan the PyPI RSS feeds to look for new packages.
            If name is found in config then execute the specified callback.

            @config is a dict with keys matching package names and values
            are lists of dicts
                {
                    'cb' : a_callback,
                    'args' : dict
                }
        """
        rss = get_url("https://pypi.python.org/pypi?:action=rss")
        dom = parseString(rss)
        for item in dom.getElementsByTagName("item"):
            try:
                title = item.getElementsByTagName("title")[0]
                pub_date = item.getElementsByTagName("pubDate")[0]

                (name, version) = title.firstChild.wholeText.split(" ")
                released_on = datetime.strptime(pub_date.firstChild.wholeText, '%d %b %Y %H:%M:%S GMT')

                if name in config.keys():
                    print name, version, "found in config"
                    for cfg in config[name]:
                        try:
                            args = cfg['args']
                            args.update({
                                'name' : name,
                                'version' : version,
                                'released_on' : released_on
                            })

                            # execute the call back
                            cfg['cb'](**args)
                        except Exception, e:
                            print e
                            continue
            except Exception, e:
                print e
                continue

    def update_travis(data, new_version):
        travis = data.rstrip()
        new_ver_line = " - VERSION=%s" % new_version
        if travis.find(new_ver_line) == -1:
            travis += "\n" + new_ver_line + "\n"
        return travis

    def update_github(**kwargs):
        """
            Update GitHub via API
        """
        GITHUB_REPO = kwargs.get('GITHUB_REPO')
        GITHUB_BRANCH = kwargs.get('GITHUB_BRANCH')
        GITHUB_FILE = kwargs.get('GITHUB_FILE')

        # step 1: Get a reference to HEAD
        data = get_url("/repos/%s/git/refs/heads/%s" % (GITHUB_REPO, GITHUB_BRANCH))
        HEAD = {
            'sha' : data['object']['sha'],
            'url' : data['object']['url'],
        }

        # step 2: Grab the commit that HEAD points to
        data = get_url(HEAD['url'])
        # remove what we don't need for clarity
        for key in data.keys():
            if key not in ['sha', 'tree']:
                del data[key]
        HEAD['commit'] = data

        # step 4: Get a hold of the tree that the commit points to
        data = get_url(HEAD['commit']['tree']['url'])
        HEAD['tree'] = { 'sha' : data['sha'] }

        # intermediate step: get the latest content from GitHub and make an updated version
        for obj in data['tree']:
            if obj['path'] == GITHUB_FILE:
                data = get_url(obj['url']) # get the blob from the tree
                data = base64.b64decode(data['content'])
                break

        old_travis = data.rstrip()
        new_travis = update_travis(old_travis, kwargs.get('version'))

        # bail out if nothing changed
        if new_travis == old_travis:
            print "new == old, bailing out", kwargs
            return

        ####
        #### WARNING WRITE OPERATIONS BELOW
        ####

        # step 3: Post your new file to the server
        data = post_url(
            "/repos/%s/git/blobs" % GITHUB_REPO,
            {
                'content' : new_travis,
                'encoding' : 'utf-8'
            }
        )
        HEAD['UPDATE'] = { 'sha' : data['sha'] }

        # step 5: Create a tree containing your new file
        data = post_url(
            "/repos/%s/git/trees" % GITHUB_REPO,
            {
                "base_tree": HEAD['tree']['sha'],
                "tree": [{
                    "path": GITHUB_FILE,
                    "mode": "100644",
                    "type": "blob",
                    "sha": HEAD['UPDATE']['sha']
                }]
            }
        )
        HEAD['UPDATE']['tree'] = { 'sha' : data['sha'] }

        # step 6: Create a new commit
        data = post_url(
            "/repos/%s/git/commits" % GITHUB_REPO,
            {
                "message": "New upstream dependency found! Auto update .travis.yml",
                "parents": [HEAD['commit']['sha']],
                "tree": HEAD['UPDATE']['tree']['sha']
            }
        )
        HEAD['UPDATE']['commit'] = { 'sha' : data['sha'] }

        # step 7: Update HEAD, but don't force it!
        data = post_url(
            "/repos/%s/git/refs/heads/%s" % (GITHUB_REPO, GITHUB_BRANCH),
            {
                "sha": HEAD['UPDATE']['commit']['sha']
            }
        )

        if data.has_key('object'): # PASS
            pass
        else: # FAIL
            print data['message']

    if __name__ == "__main__":
        config = {
            "atodorov-test" : [
                {
                    'cb' : update_github,
                    'args': {
                        'GITHUB_REPO' : 'atodorov/bztest',
                        'GITHUB_BRANCH' : 'master',
                        'GITHUB_FILE' : '.travis.yml'
                    }
                }
            ],
            "Markdown" : [
                {
                    'cb' : update_github,
                    'args': {
                        'GITHUB_REPO' : 'atodorov/Markdown-Bugzilla-Extension',
                        'GITHUB_BRANCH' : 'master',
                        'GITHUB_FILE' : '.travis.yml'
                    }
                },
                {
                    'cb' : update_github,
                    'args': {
                        'GITHUB_REPO' : 'atodorov/Markdown-No-Lazy-Code-Extension',
                        'GITHUB_BRANCH' : 'master',
                        'GITHUB_FILE' : '.travis.yml'
                    }
                },
                {
                    'cb' : update_github,
                    'args': {
                        'GITHUB_REPO' : 'atodorov/Markdown-No-Lazy-BlockQuote-Extension',
                        'GITHUB_BRANCH' : 'master',
                        'GITHUB_FILE' : '.travis.yml'
                    }
                },
            ],
        }

        # check the RSS to see if we have something new
        monitor_rss(config)


Commit a file with the GitHub API and Python

How do you commit changes to a file using the GitHub API ? I've found this post by Levi Botelho which explains the necessary steps but without any code. So I've used it and created a Python example.

I've rearranged the steps so that all write operations follow after a certain section in the code and also added an intermediate section which creates the updated content based on what is available in the repository.

I'm just appending versions of Markdown to the .travis.yml (I will explain why in my next post) and this is hard-coded for the sake of example. All content related operations are also based on the GitHub API because I want to be independent of the source code being around when I push this script to a hosting provider.

I've tested this script against itself. In the commits log you can find the Automatic update to Markdown-X.Y messages. These are from the script. Also notice the Merge remote-tracking branch 'origin/master' messages, these appeared when I pulled to my local copy. I believe the reason for this is that I have some dangling trees and/or commits from the time I was still experimenting with a broken script. I've tested on another clean repository and there are no such merges.

IMPORTANT

For this to work you need to properly authenticate with GitHub. I've created a new token at https://github.com/settings/tokens with the public_repo permission and that works for me.


Blog Migration: from Octopress to Pelican

Finally I have migrated this blog from Octopress to Pelican. I am using the clean-blog theme with modifications.

See the pelican_migration branch for technical details. Here's the summary:

- I removed pretty much everything that Octopress uses, only left the content files;

- I've added my own CSS overrides;

- I had several Octopress pages, these were merged and converted into blog posts;

- In Octopress all titles had quotes, which were removed using sed;

- Octopress categories were converted to Pelican tags and removed quotes around them, again using sed;

- Manually updated Octopress's {% codeblock %} and {% blockquote %} tags to match Pelican syntax. This is the biggest content change;

- I was trying to keep as many of the original URLs as possible. ARTICLE_URL, ARTICLE_SAVE_AS, TAG_URL, TAG_SAVE_AS, FEED_ALL_ATOM and TAG_FEED_ATOM are the relevant settings. For 50+ posts I had to manually specify the Slug: variable so that they match existing Octopress URLs. Verifying the resulting names was as simple as diffing the file listings from both Octopress and Pelican. NOTE: The fedora.planet tag changed its URL because there's no way to assign slugs for tags in Pelican. The new URL is missing the dot! Luckily I make use of this only in one place which was manually updated!

I've also found a few bugs and missing functionality:

- There's no rake new_post counterpart in Pelican. See Issue 1410 and commit 6552f6f. Thanks Kevin Decherf;

- As far as I can tell the preview server doesn't regenerate files automatically. Do make regenerate and make serve in two separate shells. Thanks Kevin Decherf;

- Pelican will merge code blocks and quotes which follow one after another but are separated with a newline in Markdown. This makes it visually impossible to distinguish code from two files, or quotes from two people, which are published without any other comments in between. See Markdown-No-Lazy-BlockQuote-Extension and Markdown-No-Lazy-Code-Extension;

- The syntax doesn't allow specifying a filename or a quote title when publishing code blocks and quotes. Octopress did that easily. I will be happy with something like :::python settings.py. See PR #445;

- There's no way to specify slugs for tag URLs in order to keep compatibility with existing URLs, see Issue 1873.

I will be filing issues and pull requests for both Pelican and the clean-blog theme in the next few days so stay tuned!

UPDATED 2015-11-26: added links to issues, pull requests and custom extensions.


Call for Ideas: Graphical Test Coverage Reports

If you are working with Python and writing unit tests chances are you are familiar with the coverage reporting tool. However there are testing scenarios in which we either don't use unit tests or maybe execute different code paths (test cases) independent of each other.

For example, this is the case with installation testing in Fedora. Because anaconda, the installer, is very complex the easiest way is to test it live, not with unit tests. Even though we can get a coverage report (anaconda is written in Python) it reflects only the test case it was collected from.

coverage combine can be used to combine several data files and produce an aggregate report. This can tell you how much test coverage you have across all your tests.

As far as I can tell Python's coverage doesn't tell you how many times a particular line of code has been executed. It also doesn't tell you which test cases executed a particular line (see PR #59). In the Fedora example, I have the feeling many of our tests are touching the same code base and not contributing that much to the overall test coverage. So I started working on these items.

I imagine a script which will read coverage data from several test executions (preferably in JSON format, PR #60) and produce a graphical report similar to what GitHub does for your commit activity.
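A rough sketch of the aggregation step could look like the following. The JSON layout (a mapping of file name to the list of executed line numbers) is an assumption made for this example and not necessarily the format proposed in PR #60; count_line_hits and shade are hypothetical helpers. Note that this counts how many test runs touched a line, not how many times the line was executed within a run.

```python
import json
from collections import Counter


def count_line_hits(reports):
    """Count in how many test runs each (file, line) pair was executed.

    Each report is assumed to be a JSON string of the hypothetical form
    {"file.py": [1, 2, 5], ...}.
    """
    hits = Counter()
    for report in reports:
        for filename, lines in json.loads(report).items():
            # set() guards against a line being listed twice in one report
            for line in set(lines):
                hits[(filename, line)] += 1
    return hits


def shade(count, total_runs, levels=4):
    """Map a hit count to a colour level: 0 = never run, levels = run by every test."""
    if count == 0:
        return 0
    return max(1, (levels * count) // total_runs)
```

The colour level could then be rendered as a darker or lighter cell per source line, similar to a commit activity chart.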

See an example here!

The example uses darker colors to indicate more line executions, lighter for fewer executions. Check the HTML for the actual numbers b/c there are no hints yet. The input JSON files are here and the script to generate the above HTML is at GitHub.

Now I need your ideas and comments!

What kinds of coverage reports are you using in your job? How do you generate them? What do they look like?


DEVit Conf 2015 Impressions

It's been a busy week after DEVit conf took place in Thessaloniki. Here are my impressions.

Sessions

I started the day with the session called "Crack, Train, Fix, Release" by Chris Heilmann. While it was very interesting, for some unknown reason I was expecting a talk more closely related to software testing. Unfortunately at the same time in the other room there was a talk called "Integration Testing from the Trenches" by Nicolas Frankel, which I missed.

At the end Chris answered the question "What to do about old versions of IE ?". And the answer pretty much was "Don't try to support everything, leave them with basic functionality so that users can achieve what they came for on your website. Don't put nice buttons b/c IE 6 users are not used to nice things and they get confused."

If you remember I had a similar question to Jeremy Keith at Bulgaria Web Summit last month and the answer was similar:

Q: Which one is Jeremy's favorite device/browser to develop for.

A: Your approach is wrong and instead we should be thinking in terms of what features are essential or non-essential for our websites and develop around features (if supported, if not supported) not around browsers!

Btw I did ask Chris if he knows Jeremy and he does.

After the coffee break there was "JavaScript ♥ Unicode" by Mathias Bynens which I saw last year at How Camp in Veliko Tarnovo so I just stopped by to say hi and went to listen to "The future of responsive web design: web component queries" by Nikos Zinas. As far as I understood Nikos is a local rock-star developer. I'm not much into web development but the opportunity to create your own HTML components (tags) looks very promising. I guess there will be more business coming for Telerik :).

I wanted to listen to "Live Productive Coder" by Heinz Kabutz but that one started in Greek so I switched the room for "iOS real time content modifications using websockets" by Benny Weingarten-Gabbay.

After lunch I went straight for "Introduction to Docker: What is it and why should I care?" by Ian Miell which IMO was the most interesting talk of the day. It wasn't very technical but managed to clear some of the mysticism around Docker and what it actually is. I tried to grab a few minutes of Ian's time and we found topics of common interest to talk about (Project Atomic anyone?) but later failed to find him and continue the talk. I guess I'll have to follow online.

Tim Perry with "Your Web Stack Would Betray You In An Instant" made a great show. The room was packed, I myself was actually standing the whole time. He described a series of failures across the entire web development stack which gave developers a hard time patching and upgrading their services. The lesson: everything fails, be prepared!

The last talk I visited was "GitHub Automation" by Forbes Lindesay. It was more of an inspirational talk than a technical one. GitHub provides a cool API so why not use it?

Organization

From what I know this is the first year of DEVit. For a first timer the team did great! I particularly liked the two coffee breaks, before lunch and in the early afternoon, and the sponsors' pitches in between the main talks.

All talks were recorded but I have no idea what's happening with the videos!

I will definitely make a point of visiting Thessaloniki more often and follow the local IT and start-up scenes there. And tonight is Silicon Drinkabout which will be the official after party of DigitalK in Sofia.


Free Software Testing Books

There's a huge list of free books on the topic of software testing. This will definitely be my summer reading list. I hope you find it helpful.

200 Graduation Theses About Software Testing

The guys from QAHelp have compiled a list of 200 graduation theses from various universities which are freely accessible online. The list can be found here.


Videos from Bulgaria Web Summit 2015

Bulgaria Web Summit 2015 is over. The event was incredible and I had a lot of fun moderating the main room. We had many people coming from other countries and I've made lots of new friends. Thank you to everyone who attended!

You can find video recordings of all talks in the main room (in order of appearance) below:

Hope to see you next time in Sofia!

Meanwhile I learned about DEVit in Thessaloniki in May and another one in Zagreb in October. See you there :)


Speeding Up Celery Backends, Part 3

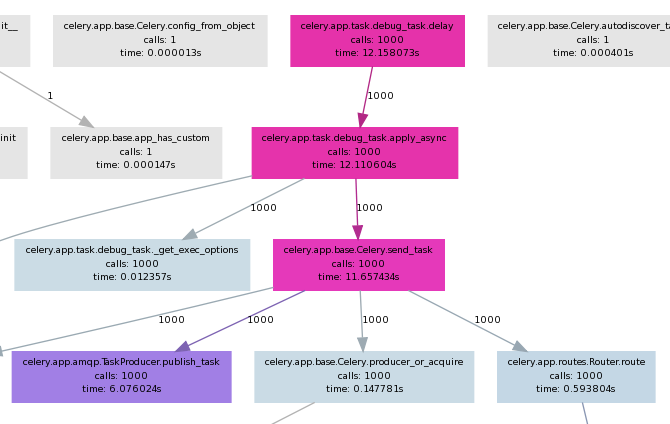

In the second part of this article we've seen how slow Celery actually is. Now let's explore what happens inside and see if we can't speed things up.

I've used pycallgraph to create call graph visualizations of my application. It has the nice feature to also show execution time and use different colors for fast and slow operations.

Full command line is:

pycallgraph -v --stdlib --include ... graphviz -o calls.png -- ./manage.py celery_load_test

where the --include is used to limit the graph to a particular Python module(s).

General findings

- The first four calls are where most of the time is spent, as seen on the picture.

- It seems most of the slowdown comes from Celery itself, not the underlying messaging transport Kombu (not shown on picture).

- celery.app.amqp.TaskProducer.publish_task takes half of the execution time of celery.app.base.Celery.send_task

- celery.app.task.Task.delay directly executes .apply_async and can be skipped if one rewrites the code.

More findings

In celery.app.base.Celery.send_task there is this block of code:

    349 with self.producer_or_acquire(producer) as P:
    350     self.backend.on_task_call(P, task_id)
    351     task_id = P.publish_task(
    352         name, args, kwargs, countdown=countdown, eta=eta,
    353         task_id=task_id, expires=expires,
    354         callbacks=maybe_list(link), errbacks=maybe_list(link_error),
    355         reply_to=reply_to or self.oid, **options
    356     )

producer is always None because delay() doesn't pass it as argument.

I've tried passing it explicitly to apply_async() like so:

    from djapp.celery import *
    # app = debug_task._get_app() # if not defined in djapp.celery
    producer = app.amqp.producer_pool.acquire(block=True)
    debug_task.apply_async(producer=producer)

However this doesn't speed up anything. If we replace the above code block like this:

    349 with producer as P:

it blows up on the second iteration because producer and its channel are already None !?!

If you are unfamiliar with the with statement in Python please read this article. In short the with statement is a compact way of writing try/finally. The underlying kombu.messaging.Producer class does a self.release() on exit of the with statement.
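To illustrate why reusing the same object in a second with block fails, here is a small sketch. FakeProducer and publish() are hypothetical stand-ins I made up; this is not Kombu's actual class, only the release-on-exit behavior described above.

```python
class FakeProducer:
    """Hypothetical stand-in for a pooled producer; not Kombu's real class."""

    def __init__(self):
        self.channel = "channel-1"

    def release(self):
        # Give the channel back; the object is unusable afterwards.
        self.channel = None

    def __enter__(self):
        return self

    def __exit__(self, exc_type, exc_value, traceback):
        # Runs on exit of the with block, like the finally in try/finally.
        self.release()
        return False  # do not swallow exceptions


def publish(producer, message):
    if producer.channel is None:
        raise RuntimeError("producer was already released")
    return "sent %s via %s" % (message, producer.channel)


producer = FakeProducer()

# First iteration works and releases the producer on exit.
with producer as p:
    first = publish(p, "task-1")

# Second iteration fails because the channel is gone.
try:
    with producer as p:
        publish(p, "task-2")
except RuntimeError as err:
    second = str(err)
```

The first with block is equivalent to calling publish() inside try and producer.release() inside finally.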

I also tried killing the with statement and using producer directly but with limited success. While it was not released (it stayed non-None) on subsequent iterations, the memory usage grew much more and there wasn't any performance boost.

Conclusion

The with statement is used throughout both Celery and Kombu and I'm not at all sure if there's a mechanism for keep-alive connections. My time is limited and I'll probably not spend any more time on this problem soon.

Since my use case involves task producer and consumers on localhost I'll try to work around the current limitations by using Kombu directly (see this gist) with a transport that uses either a UNIX domain socket or a named pipe (FIFO) file.


Speeding up Celery Backends, Part 2

In the first part of this post I looked at a few celery backends and discovered they didn't meet my needs. Why is the Celery stack slow? How slow is it actually?

How slow is Celery in practice

- Queue: 500`000 msg/sec

- Kombu: 14`000 msg/sec

- Celery: 2`000 msg/sec

Detailed test description

There are three main components of the Celery stack:

- Celery itself

- Kombu which handles the transport layer

- Python Queue()'s underlying everything

Using the Queue and Kombu tests run for 1 000 000 messages I got the following results:

- Raw Python Queue: Msgs per sec: 500`000

- Raw Kombu without Celery where kombu/utils/__init__.py:uuid() is set to return 0

  - with json serializer: Msgs per sec: 5`988

  - with pickle serializer: Msgs per sec: 12`820

  - with the custom mem_serializer from part 1: Msgs per sec: 14`492

Note: when the test is executed with 100K messages mem_serializer yielded 25`000 msg/sec; beyond that the performance saturates. I've observed similar behavior with raw Python Queue()'s. I saw some cache buffers being managed internally to avoid OOM exceptions. This is probably the main reason performance becomes saturated over a longer execution.

- Using celery_load_test.py modified to loop 1 000 000 times I got 1908.0 tasks created per sec.
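For reference, a raw queue measurement can be sketched roughly as below. This is not the original test script; it uses Python 3's queue module, and queue_throughput() is a name I made up. The numbers you get will depend on your machine.

```python
import time
from queue import Queue


def queue_throughput(count):
    """Put and get `count` messages; return (received, messages per second)."""
    q = Queue()
    start = time.perf_counter()
    for i in range(count):
        q.put(i)
    received = 0
    while not q.empty():
        q.get()
        received += 1
    elapsed = time.perf_counter() - start
    rate = (received / elapsed) if elapsed > 0 else float("inf")
    return received, rate


received, rate = queue_throughput(1000)
print("Msgs per sec: %d" % rate)
```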

Another interesting thing worth pointing out - in the kombu test there are these lines:

    with producers[connection].acquire(block=True) as producer:
        for j in range(1000000):

If we swap them the performance drops down to 3875 msg/sec which is comparable with the Celery results. Indeed inside Celery there's the same with producer.acquire(block=True) construct which is executed every time a new task is published. Next I will be looking into this to figure out exactly where the slowness comes from.
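The difference between the two orderings can be shown with a toy pool that only counts how many times it is acquired. CountingPool is a hypothetical class for illustration; it does not measure the real cost of acquiring a Kombu producer.

```python
from contextlib import contextmanager


class CountingPool:
    """Hypothetical pool that counts acquire cycles."""

    def __init__(self):
        self.acquired = 0

    @contextmanager
    def acquire(self):
        self.acquired += 1
        try:
            yield self
        finally:
            pass  # a real pool would take the resource back here


def publish_outside_loop(pool, n):
    # Acquire once, publish n messages with the same producer.
    with pool.acquire() as producer:
        for _ in range(n):
            pass  # publish using producer
    return pool.acquired


def publish_inside_loop(pool, n):
    # Acquire and release for every single message.
    for _ in range(n):
        with pool.acquire() as producer:
            pass  # publish using producer
    return pool.acquired


once = publish_outside_loop(CountingPool(), 1000)
every_time = publish_inside_loop(CountingPool(), 1000)
```

The first variant pays the acquire/release cost once, the second pays it for every message, which is the pattern Celery follows when publishing a task.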


Speeding up Celery Backends, Part 1

I'm working on an application which fires a lot of Celery tasks - the more the better! Unfortunately Celery backends seem to be rather slow :(. Using the celery_load_test.py command for Django I was able to capture some metrics:

- Amazon SQS backend: 2 or 3 tasks/sec

- Filesystem backend: 2000 - 2500 tasks/sec

- Memory backend: around 3000 tasks/sec

Not bad but I need in the order of 10000 tasks created per sec! The other noticeable thing is that memory backend isn't much faster compared to the filesystem one! NB: all of these backends actually come from the kombu package.

Why is Celery slow ?

Using celery_load_test.py together with cProfile I was able to pin-point some problematic areas:

- kombu/transport/virtual/__init__.py: class Channel.basic_publish() - does self.encode_body() into base64 encoded string. Fixed with custom transport backend I called fastmemory which redefines the body_encoding property:

      @cached_property
      def body_encoding(self):
          return None

- Celery uses json or pickle (or other) serializers to serialize the data. While json yields between 2000-3000 tasks/sec, pickle does around 3500 tasks/sec. Replacing with a custom serializer which just returns the objects (since we read/write from/to memory) yields about 4000 tasks/sec tops:

      from kombu.serialization import register

      def loads(s):
          return s

      def dumps(s):
          return s

      register('mem_serializer', dumps, loads,
               content_type='application/x-memory',
               content_encoding='binary')

- kombu/utils/__init__.py: def uuid() - generates random unique identifiers which is a slow operation. Replacing it with return "00000000" boosts performance to 7000 tasks/sec.

It's clear that a constant UUID is not of any practical use but it serves well to illustrate how much this function affects performance.
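A rough way to compare the two is a micro-benchmark with timeit. This sketch assumes the standard library's uuid.uuid4() as a stand-in for Kombu's uuid(); the helper names are mine and the timings will vary between systems.

```python
import timeit
import uuid


def random_id():
    # Stand-in for a random unique identifier generator.
    return str(uuid.uuid4())


def constant_id():
    # The constant replacement used in the experiment above.
    return "00000000"


def seconds_per_call(func, number=10000):
    """Average wall-clock time of a single call to func."""
    return timeit.timeit(func, number=number) / number


random_cost = seconds_per_call(random_id)
constant_cost = seconds_per_call(constant_id)
print("random: %.2e s/call, constant: %.2e s/call" % (random_cost, constant_cost))
```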

Note: Subsequent executions of celery_load_test seem to report degraded performance even with the most optimized transport backend. I'm not sure why this is. One possibility is the random UUID usage in other parts of the Celery/Kombu stack which drains entropy on the system so that generating more random numbers becomes slower. If you know better please tell me!

I will be looking for a better understanding of these IDs in Celery and hope to be able to produce a faster uuid() function. Then I'll be exploring the transport stack even more in order to reach the goal of 10000 tasks/sec. If you have any suggestions or pointers please share them in the comments.


Tip: Collecting Emails - Webhooks for UserVoice and WordPress.com

In my practice I like to use webhooks and integrate auxiliary services with my internal processes or businesses. One of these is the collection of emails. In this short article I'll show you an example of how to collect email addresses from the comments of a WordPress.com blog and the UserVoice feedback/ticketing system.

WordPress.com

For your WordPress.com blog from the Admin Dashboard navigate to Settings -> Webhooks and add a new webhook with action comment_post and fields comment_author, comment_author_email. A simple Django view that handles the input is shown below.

    from django.http import HttpResponse
    from django.views.decorators.csrf import csrf_exempt

    @csrf_exempt
    def hook_wp_comment_post(request):
        # reject anything which is not a POST with data
        if not request.POST:
            return HttpResponse("Not a POST\n", content_type='text/plain', status=403)

        hook = request.POST.get("hook", "")

        if hook != "comment_post":
            return HttpResponse("Go away\n", content_type='text/plain', status=403)

        # the first word is the first name, everything else is the last name
        name = request.POST.get("comment_author", "")
        first_name = name.split(' ')[0]
        last_name = ' '.join(name.split(' ')[1:])

        details = {
            'first_name' : first_name,
            'last_name' : last_name,
            'email' : request.POST.get("comment_author_email", ""),
        }

        store_user_details(details)

        return HttpResponse("OK\n", content_type='text/plain', status=200)

UserVoice

For UserVoice navigate to Admin Dashboard -> Settings -> Integrations -> Service Hooks and add a custom web hook for the New Ticket notification. Then use sample code like this:

    import json

    from django.http import HttpResponse
    from django.views.decorators.csrf import csrf_exempt

    @csrf_exempt
    def hook_uservoice_new_ticket(request):
        if not request.POST:
            return HttpResponse("Not a POST\n", content_type='text/plain', status=403)

        data = request.POST.get("data", "")
        event = request.POST.get("event", "")

        if event != "new_ticket":
            return HttpResponse("Go away\n", content_type='text/plain', status=403)

        # the ticket details arrive as a JSON encoded string
        data = json.loads(data)

        details = {
            'email' : data['ticket']['contact']['email'],
        }

        store_user_details(details)

        return HttpResponse("OK\n", content_type='text/plain', status=200)

store_user_details() is a function which handles the email/name received in the webhook, possibly adding them to a database or anything else.

I find webhooks extremely easy to set up and develop, and I use them whenever they are supported by the service provider. What other services do you use webhooks for? Please share your story in the comments.


OpenSource.com article - 10 steps to migrate your closed software to open source

Difio is a Django based application that keeps track of packages and tells you when they change. Difio was created as closed software, then I decided to migrate it to open source ....

Read more at OpenSource.com

Btw I'm wondering if Telerik will share their experience opening up the core of their Kendo UI framework on the webinar tomorrow.


Howto: Django Forms with Dynamic Fields

Last week at HackFMI 3.0 one team had to display a form which presented multiple choice selection for filtering, where the filter keys are read from the database. They've solved the problem by simply building up the HTML required inside the view. I was wondering if this can be done with forms.

    >>> from django import forms
    >>>
    >>> class MyForm(forms.Form):
    ...     pass
    ...
    >>> print(MyForm())

    >>> MyForm.__dict__['base_fields']['name'] = forms.CharField()
    >>> MyForm.__dict__['base_fields']['age'] = forms.IntegerField()
    >>> print(MyForm())
    <tr><th><label for="id_name">Name:</label></th><td><input id="id_name" name="name" type="text" /></td></tr>
    <tr><th><label for="id_age">Age:</label></th><td><input id="id_age" name="age" type="number" /></td></tr>
    >>>
    >>>
    >>> POST = {'name' : 'Alex', 'age' : 0}
    >>> f = MyForm(POST)
    >>> print(f)
    <tr><th><label for="id_name">Name:</label></th><td><input id="id_name" name="name" type="text" value="Alex" /></td></tr>
    <tr><th><label for="id_age">Age:</label></th><td><input id="id_age" name="age" type="number" value="0" /></td></tr>
    >>> f.is_valid()
    True
    >>> f.is_bound
    True
    >>> f.errors
    {}
    >>> f.cleaned_data
    {'age': 0, 'name': u'Alex'}

So if we were to query all names from the database then we could build up the class by adding a BooleanField using the object primary key as the name.

    >>> MyForm.__dict__['base_fields']['123'] = forms.BooleanField()
    >>> print(MyForm())
    <tr><th><label for="id_123">123:</label></th><td><input id="id_123" name="123" type="checkbox" /></td></tr>
    >>> f = MyForm({'123' : True})
    >>> f.is_valid()
    True
    >>> f.cleaned_data
    {'123': True}


Spoiler: How to Open Source Existing Proprietary Code in 10 Steps

We've heard about companies opening up their proprietary software products; this is hardly news nowadays. But have you wondered what it is like to migrate production software from closed to open source? I would like to share my own experience about going open source as seen from behind the keyboard.

Difio was recently open sourced and the steps to go through were:

- Simplify - remove everything that can be deleted

- Create self contained modules aka re-organize the file structure

- Separate internal from external modules

- Refactor the existing code

- Select license and update copyright

- Update 3rd party dependencies to latest versions and add requirements.txt

- Add README and verbose settings example

- Split difio/ into its own git repository

- Test stand alone deployments on fresh environment

- Announce

Do you want to know more? Use the comments and tell me what exactly! I'm writing a longer version of this article so stay tuned!


Beware of Django default Model Field Option When Using datetime.now()

Beware if you are using code like this:

models.DateTimeField(default=datetime.now())

i.e. passing a function return value as the default option for a model field in Django. In some cases the value will be calculated once when the application starts or the module is imported and will not be updated later. The most common scenario is DateTime fields which default to now(). The correct way is to use a callable:

models.DateTimeField(default=datetime.now)
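The difference can be reproduced without Django. In the sketch below Field is a simplified, hypothetical stand-in for a model field (not Django's implementation) and now() is a fake clock which returns a new value on every call.

```python
import itertools

_ticks = itertools.count(1)


def now():
    """Fake clock standing in for datetime.now; advances on every call."""
    return next(_ticks)


class Field:
    """Simplified stand-in for a model field with a default option."""

    def __init__(self, default):
        self.default = default

    def get_default(self):
        # A callable default is evaluated each time a new object is created;
        # any other value is reused as-is.
        return self.default() if callable(self.default) else self.default


frozen = Field(default=now())  # evaluated once, at definition time
live = Field(default=now)      # evaluated on every get_default() call

frozen_values = [frozen.get_default(), frozen.get_default()]
live_values = [live.get_default(), live.get_default()]
```

frozen_values holds the same value twice, which is the duplicate dates problem, while live_values holds two different values.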

I've hit this issue on a low volume application which uses cron to collect its own metrics by calling an internal URL. The app was running as a WSGI app and I wondered why I got records with duplicate dates in the DB. A more detailed (correct) example follows:

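    from datetime import datetime
    from django.db import models

    def _strftime():
        # evaluated every time a new object is created
        return datetime.now().strftime('%Y-%m-%d')

    class Metrics(models.Model):
        key = models.IntegerField(db_index=True)
        value = models.FloatField()
        # pass callables, not their return values
        added_on = models.DateTimeField(db_index=True, default=datetime.now)
        added_on_as_text = models.CharField(max_length=16, default=_strftime)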

Difio also had the same bug but didn't exhibit the problem because all objects with default date-time values were created on the backend nodes which get updated and restarted very often.

For more info read this blog.

For general info on Django, please check out Django books on Amazon.
